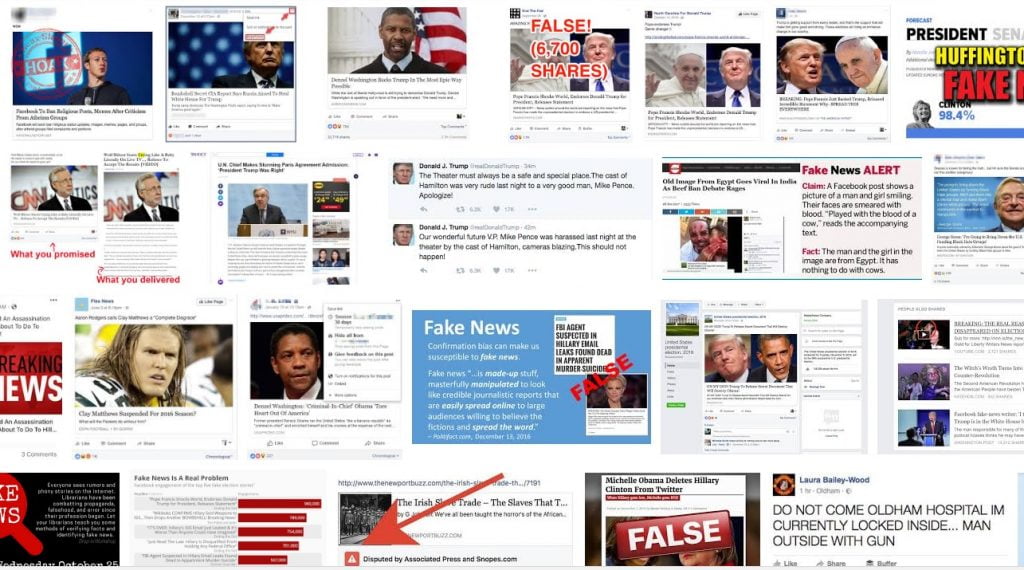

The term “fake news” has become a prominent part of our lexicon over the past two years. Defined as “false, often sensational, information disseminated under the guise of news reporting,” the expression was popularized by US President Donald Trump, first as a candidate and then in office, to attack media coverage he deems unfavorable or unfair.

However, it has also become widely used as a virtual weapon with which to attack – and even silence – opponents, with real-life consequences.

For example, just days after election day in November 2016, PepsiCo stock fell sharply when Trump supporters threatened to boycott the company for something that never happened.

Questionable websites purporting to report objective news, a phenomenon that accelerated during the election campaign, claimed that PepsiCo CEO Indra Nooyi had told Trump supporters to “take their business elsewhere,” even though no such statement was made. These assertions, by sources such as Conservative Treehouse and TruthFeed, were only later debunked when Nooyi clarified that she had merely expressed her employees’ concerns over Trump’s election.

Fake news has not only damaged the public image of companies and corporations, it has also cast doubt on the trustworthiness of the media and highlighted the role of big tech companies like Facebook and Google, which have faced criticism for how their platforms were used to disseminate fabricated information.

A January report showed that only 42 percent of US citizens trust the media, a decline largely attributed to the inability to distinguish between real and fake journalism.

Disinformation has also been used as a geopolitical weapon. In the 2016 US election, Russian hackers associated with the Moscow-backed Internet Research Agency were responsible for propagating distorted and fallacious reports on social media, including Tumblr and Facebook. Many questions remain about what role these and other manipulations, such as the Cambridge Analytica data breach, played in Trump’s victory.

Furthermore, China has mounted disinformation campaigns against Taiwan to undermine support for President Tsai Ing-wen.

Amid the chaos, tech companies and entrepreneurs, including in Israel, have been trying to find solutions to these rising threats.

Last month, the annual Cyber Week conference at Tel Aviv University hosted a panel dedicated to fake news, reflecting the growing technical, political and sociological complexity of false media campaigns. Alongside this dialogue, enterprises and individuals have been developing measures to root out troublesome fake sources.

Information: A double-edged sword

At Cyber Week, the panel “Fake News: Influence Operations and Global Geopolitics” proposed that although the digital age has given citizens more avenues to discover the truth, it has also given hostile parties an opportunity to launch a new type of attack – disinformation warfare. The talk covered a wide spectrum of topics, including the definition of fake news, the harm these campaigns cause and existing frameworks to discourage such attacks.

A key takeaway from the discussion was the need for societal awareness of these attacks. In an address to the audience, Joseph S. Nye, former dean of the Harvard Kennedy School of Government, cited the importance of increasing individual media literacy. According to Nye, a poll conducted by Pew Research Center indicated that most people are unable to distinguish between fact and opinion, a skill that is crucial to preventing fake news from spreading. If there is a prevailing attitude of skepticism toward opinionated media, cyberattacks are less likely to succeed, Nye explained.

Alongside this measure, governments should urge more parties to take responsibility for fighting false information with real information, Dr. Michael Sulmeyer of the Harvard Belfer Center said in a presentation. Sulmeyer encouraged governments to promote individual fact-checking and to push multiple journalists to cover the same topic, so that readers can verify information against multiple sources. This would “combat trash with good, with accuracy,” he remarked.

Other speakers at the event included Dr. Eviatar Matania, the head of the National Cyber Bureau in the Prime Minister’s Office. He explained that through promoting general societal awareness and working with the national defense to develop infrastructure to neutralize sources of cyberattacks, the bureau can help prevent false information attacks.

“We should build a resilient population, which means we should educate people to try not to believe everything that they see. We need vibrant and independent media with different ideas and we need everyone to take responsibility – not just government but also corporations,” he said, according to the Times of Israel.

Fake profiles: The source of the problem

Another method of false information resistance involves weeding out sources that generate fake news.

Cyabra, an Israeli media protection company, has developed a platform companies can use to identify profiles that have been created to generate fake news and manipulations. According to the company, real and fake profiles differ in the traces they leave across social networks such as Facebook, Twitter and Instagram. For instance, fake accounts are often created immediately prior to an attack and make an uncharacteristically large number of connections in a short timespan. They also have larger-than-usual internet footprints. Cyabra’s technologies capitalize on these traits to recognize inauthentic sources of information.

In a telephone interview with NoCamels, founder Dan Brahmy further explained the technology. “We have gathered so far 100 different behavioral parameters that describe anomalies. We provide a grade that is based on AI and machinery based on the context of the attack… It’s a mix of understanding the content and the context of the attack and looking at the identity.”
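To make the idea concrete, here is a minimal Python sketch of how two of the traits mentioned above (an account created shortly before an attack, and a burst of new connections in a short timespan) could be combined into a single grade. It is not Cyabra's system: the `Profile` class, the `suspicion_grade` function, and every threshold and weight are hypothetical choices made only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Profile:
    """Hypothetical profile record; field names are assumptions."""
    created_at: datetime
    connection_times: List[datetime]  # when each connection was made


def suspicion_grade(profile: Profile,
                    attack_start: datetime,
                    new_account_days: int = 7,      # assumed threshold
                    burst_window_hours: int = 24,   # assumed threshold
                    burst_threshold: int = 50) -> float:
    """Return a toy grade in [0, 1]; higher means more suspicious."""
    grade = 0.0

    # Trait 1: the account was created shortly before the attack began.
    age = attack_start - profile.created_at
    if timedelta(0) <= age <= timedelta(days=new_account_days):
        grade += 0.5

    # Trait 2: many connections inside one short window (sliding window).
    window = timedelta(hours=burst_window_hours)
    times = sorted(profile.connection_times)
    largest_burst = 0
    start = 0
    for end, moment in enumerate(times):
        while moment - times[start] > window:
            start += 1
        largest_burst = max(largest_burst, end - start + 1)
    if largest_burst >= burst_threshold:
        grade += 0.5

    return grade
```

A real system would weigh many more signals (Brahmy mentions about 100 behavioral parameters) and would also look at the content and context of the attack, which this sketch ignores.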

Moreover, according to the company, traditional detection methods are inefficient, often taking up to six days to detect false media outlets. Cyabra says its deep-learning and data-based algorithms can expose attacks in one or two days. In the interview, Brahmy explains that this difference is critical, since it allows companies to maintain brand value, saving them millions of dollars in revenue when products are marketed incorrectly or sold illegally.

The tech is said to be fairly accurate. “We are close to 90 percent in accuracy… We hope that in the next year thanks to our efforts and fundraising, we are able to get closer to 95 percent. That’s the goal,” Brahmy tells NoCamels.

Another innovative project on the frontlines of fake profile detection is research conducted by Dima Kagan, a Ph.D. student at Ben-Gurion University of the Negev (BGU), Dr. Michael Fire, a former BGU doctoral student, and Professor Yuval Elovici, director of Cyber@BGU. In a study published in March in the journal Social Network Analysis and Mining, the researchers detailed an algorithm they developed to detect fake users on social media.

The program relies on the tendency of fake accounts to generate links to widespread communities in a random fashion, which is uncharacteristic of an authentic profile. In particular, it calculates the probability of a link existing based on variables such as language, workplace and mutual friends. If highly improbable connections exist based on these parameters, then that indicates a fake profile, according to the researchers.
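The general idea can be illustrated with a short Python sketch. It is not the researchers' algorithm: the `link_probability` scoring rule, its weights, the `improbable_link_ratio` function and the sample data are all hypothetical, chosen only to show how language, workplace and mutual friends could feed an estimate of how plausible each connection is.

```python
def link_probability(a, b, friends):
    """Toy estimate of how plausible a link between profiles a and b is.

    The weights below are illustrative assumptions, not learned values.
    """
    score = 0.05  # assumed baseline for two unrelated users
    if a["language"] == b["language"]:
        score += 0.25
    if a["workplace"] == b["workplace"]:
        score += 0.30
    mutual = len(friends[a["id"]] & friends[b["id"]])
    score += min(mutual, 4) * 0.10  # cap the mutual-friend bonus
    return min(score, 1.0)


def improbable_link_ratio(user_id, profiles, friends, threshold=0.2):
    """Fraction of a user's links whose estimated probability is below threshold."""
    links = friends[user_id]
    if not links:
        return 0.0
    improbable = sum(
        1 for other in links
        if link_probability(profiles[user_id], profiles[other], friends) < threshold
    )
    return improbable / len(links)


# Hypothetical sample graph: three colleagues plus one account ("x")
# that links into unrelated communities.
profiles = {
    "alice": {"id": "alice", "language": "he", "workplace": "BGU"},
    "bob":   {"id": "bob",   "language": "he", "workplace": "BGU"},
    "carol": {"id": "carol", "language": "he", "workplace": "BGU"},
    "dave":  {"id": "dave",  "language": "en", "workplace": "Acme"},
    "x":     {"id": "x",     "language": "ru", "workplace": "Unknown"},
}
friends = {
    "alice": {"bob", "carol", "x"},
    "bob":   {"alice", "carol"},
    "carol": {"alice", "bob"},
    "dave":  {"x"},
    "x":     {"alice", "dave"},
}

for user in profiles:
    print(user, round(improbable_link_ratio(user, profiles, friends), 2))
```

In this toy graph every one of the links held by `x` scores as improbable, while the colleagues' links to one another do not, so a high ratio marks the account as a candidate for closer inspection.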

“Most state-of-the-art methods focus on features from the point of view of the user, for instance, analyzing the user posts… Our method looks at a user as a set of relations with other users and assumes that even the best fake account will have enough suspicious connection to deviate from the norm,” Kagan tells NoCamels in an email interview.

Though the method only exists as an algorithm at the moment, Kagan says his research team hopes “that someday a social network operator will apply our method natively. Our method should work on any kind of social network and will get the best results when applied to the full social graph of the social network.”
